Autonomous Driving

Autonomous Optimal Car (AO-Car) was a research project that ran from 09/01/2016 to 03/31/2018. It addressed autonomous driving and parking of electric cars in a parking area, using multi-sensor information together with prior knowledge about the current vehicle state and the environment. The participating working groups were 'Zentrum für Technomathematik / AG Optimierung und Optimale Steuerung' (Prof. Dr. Christoph Büskens), 'Kognitive Neuroinformatik' (Prof. Dr. rer. hum. biol. Kerstin Schill), and 'Computergrafik und Virtuelle Realität' (Prof. Dr. Gabriel Zachmann) of the University of Bremen, as well as 'LRT 9.3 Institut für Raumfahrttechnik und Weltraumnutzung' (Prof. Dr.-Ing. habil. Bernd Eissfeller) and 'LRT 9.1 Institut für Raumfahrttechnik und Weltraumnutzung' (Prof. Dr.-Ing. habil. Roger Förster) of the Bundeswehr University Munich. The project was funded by the German Aerospace Center (DLR) under grant 50NA1615. The successor project is called OPA3L.

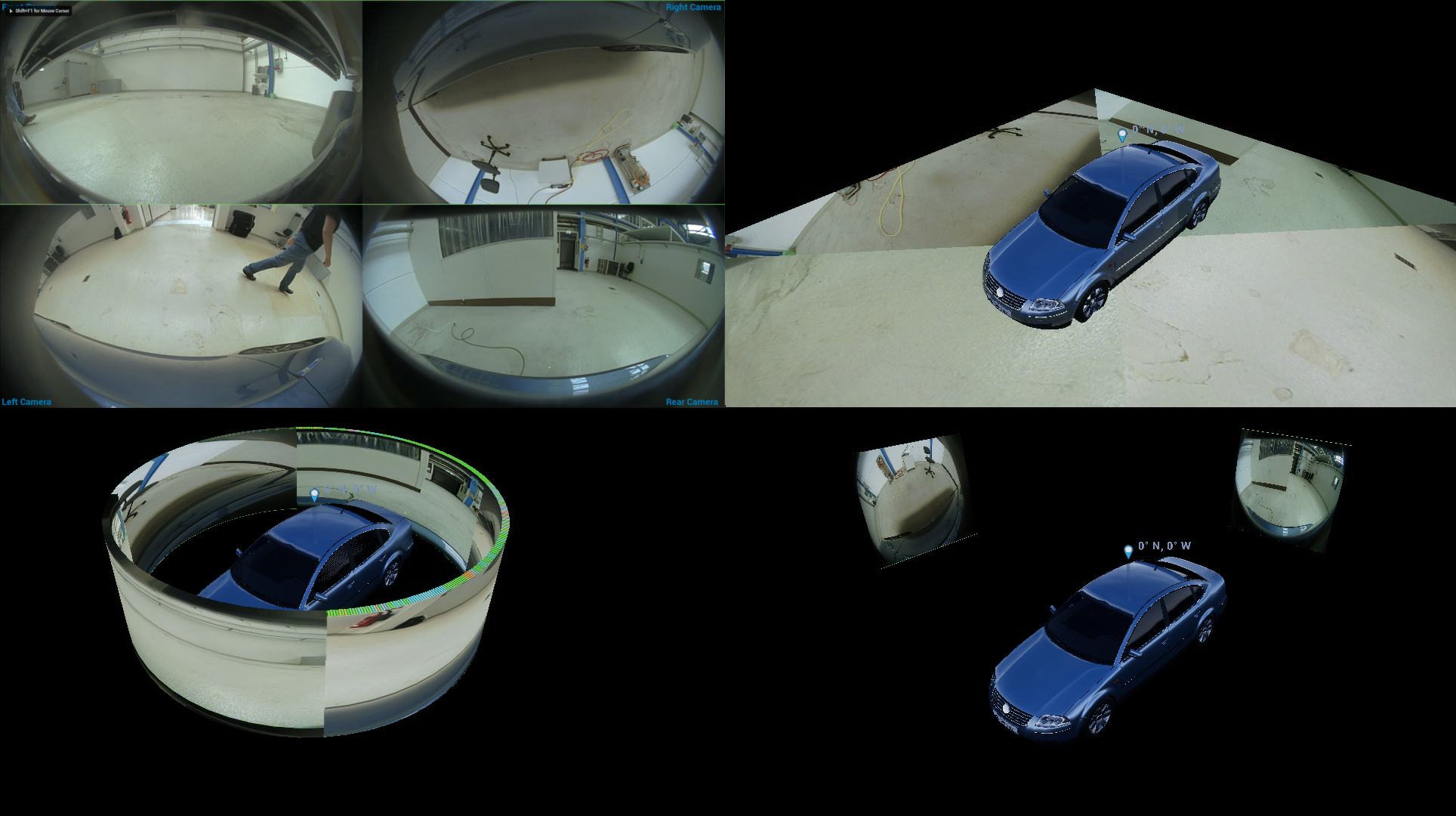

Our tasks were to visualize the sensor data for validation and demonstration, and to calculate and visualize the minimum distance to nearby objects. The car provides data from two LiDAR scanners (front center and rear center), 12 ultrasonic sensors, inertial data, GPS, and other intrinsic vehicle data via CAN. A PC in the trunk collects and processes the data using 'The Automotive Data and Time-Triggered Framework' (ADTF) 2.
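
To illustrate the minimum-distance task, here is a minimal sketch in Python. It is not the project's implementation. It assumes the obstacle points (for example, LiDAR returns) are already given as 2D coordinates in a vehicle-centered frame, and that the car can be approximated by an axis-aligned rectangle around the origin. The names `distance_to_footprint`, `min_distance`, `half_length`, and `half_width` are hypothetical and chosen for this example only.

```python
import math
from typing import Iterable, Optional, Tuple

Point = Tuple[float, float]  # (x, y) in metres, vehicle frame (assumption)


def distance_to_footprint(p: Point, half_length: float, half_width: float) -> float:
    """Distance from point p to a rectangle centred at the origin.

    The rectangle spans [-half_length, half_length] along x and
    [-half_width, half_width] along y. Points inside it yield 0.0.
    """
    dx = max(abs(p[0]) - half_length, 0.0)  # overshoot along x
    dy = max(abs(p[1]) - half_width, 0.0)   # overshoot along y
    return math.hypot(dx, dy)


def min_distance(
    points: Iterable[Point], half_length: float, half_width: float
) -> Optional[Tuple[float, Point]]:
    """Return (distance, closest point), or None if there are no points."""
    best: Optional[Tuple[float, Point]] = None
    for p in points:
        d = distance_to_footprint(p, half_length, half_width)
        if best is None or d < best[0]:
            best = (d, p)
    return best
```

With `half_length=2.0` and `half_width=1.0`, a point at `(3.0, 2.0)` lies one metre beyond the rectangle in both directions, so its distance is √2 ≈ 1.41 m. A brute-force scan like this is linear in the number of points; a real pipeline would likely work in 3D and use a spatial acceleration structure.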