Ray-Marching-Based Volume Rendering of Computed Tomography Data in a Game Engine

In this thesis, a direct volume renderer was developed and integrated into Unreal Engine 4, allowing real-time rendering of volumetric medical data, such as computed tomography (CT) data, within arbitrary polygonal scenes. The focus of the implementation was to minimize computation time while still achieving a high-quality visualization.

Description

In recent years, interest in virtual and augmented reality for medical applications has surged. Such applications are mostly built on 3D graphics engines designed for polygonal rendering, such as Unity3D or Epic's Unreal Engine. Medical data (e.g. CT data), however, is usually visualized with volume rendering, which these engines rarely support. This thesis combines the two approaches by integrating a direct volume renderer designed specifically for CT datasets into Unreal Engine 4.

The pipeline consists of two stages: data preprocessing, in which the DICOM input data is parsed and transformed and several quantities are precomputed, and the volume renderer itself. The volume renderer is based on ray marching and implemented in shaders, so it runs entirely on the GPU, which makes it real-time capable. Computation times are further reduced by several optimization techniques, for example a precomputed per-dataset octree that serves as a spatial acceleration structure.

Since the goal is not only fast computation but also high-quality visualization, several advanced lighting models and artifact-reducing techniques such as jittering, pre-integration and supersampling are employed. The illumination is computed dynamically from the lights placed in the scene (currently up to two).
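
To illustrate the core loop, here is a minimal CPU-side Python sketch of what a ray-marching shader typically does per pixel: front-to-back compositing with a jittered start position and early ray termination. It is not the thesis's shader code; the names `march_ray`, `sample`, `transfer` and `alpha_cutoff` are hypothetical, the transfer function is assumed to return an opacity already scaled for the step size, and pre-integration, supersampling and the octree are left out.

```python
import random


def march_ray(origin, direction, sample, transfer, t_near, t_far, step,
              jitter=None, alpha_cutoff=0.99):
    """Composite samples front to back along one ray.

    sample(p) returns the scalar value at point p; transfer(v) maps it to
    (r, g, b, a), with a assumed to be already scaled for the step size.
    """
    color = [0.0, 0.0, 0.0]
    alpha = 0.0
    # Jittering: shift the first sample by a random fraction of one step so
    # neighbouring rays sample at different depths (banding becomes noise).
    offset = random.random() if jitter is None else jitter
    t = t_near + offset * step
    # Early ray termination: stop once the accumulated opacity is near 1.
    while t < t_far and alpha < alpha_cutoff:
        p = tuple(o + t * d for o, d in zip(origin, direction))
        r, g, b, a = transfer(sample(p))
        weight = (1.0 - alpha) * a  # front-to-back "under" operator
        color[0] += weight * r
        color[1] += weight * g
        color[2] += weight * b
        alpha += weight
        t += step
    return (color[0], color[1], color[2], alpha)


if __name__ == "__main__":
    # Hypothetical test volume: a unit sphere of constant density 1.
    def sphere(p):
        return 1.0 if sum(c * c for c in p) < 1.0 else 0.0

    def warm_tf(v):
        return (1.0, 0.8, 0.6, 0.1 * v)

    print(march_ray((0.0, 0.0, -2.0), (0.0, 0.0, 1.0), sphere, warm_tf,
                    t_near=0.0, t_far=4.0, step=0.05))
```

Front-to-back order is what makes early ray termination possible: once the accumulated opacity approaches 1, later samples can no longer change the pixel noticeably, so the loop can stop.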

Results

In this thesis, a direct volume renderer was successfully integrated into Unreal Engine 4, enabling real-time visualization of CT data embedded in 3D scenes. The implemented volume renderer is evaluated with respect to performance and visual quality. Visual quality is rated subjectively and compared with existing open-source visualization tools. In addition, the effectiveness of several illumination and optimization techniques is evaluated individually.

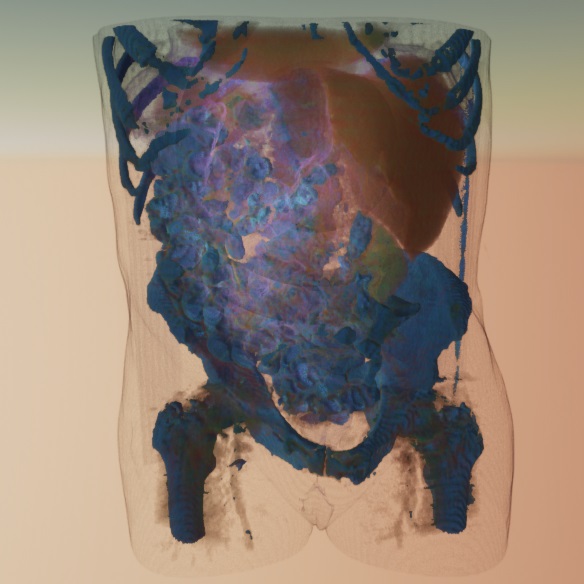

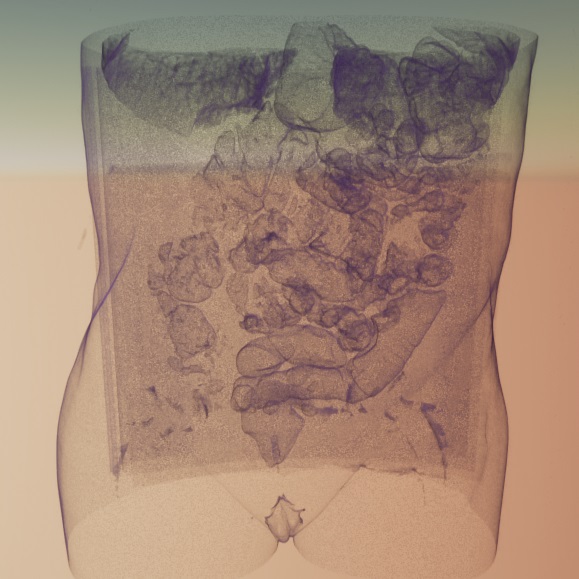

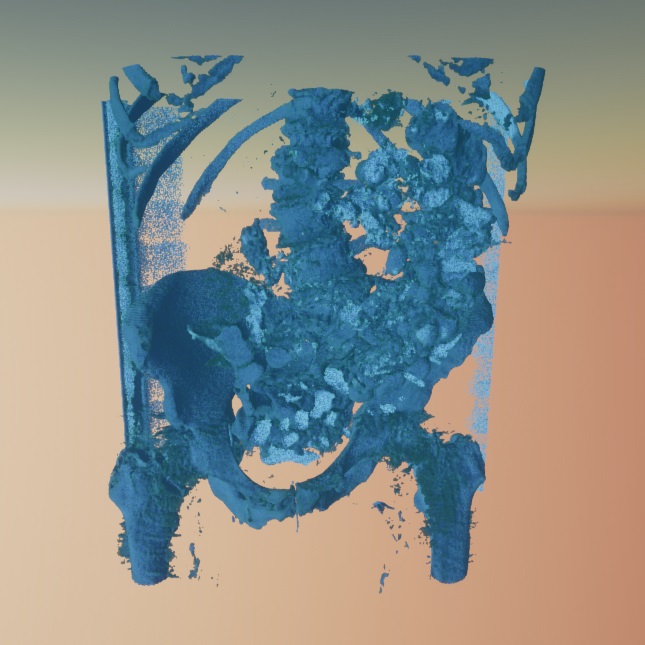

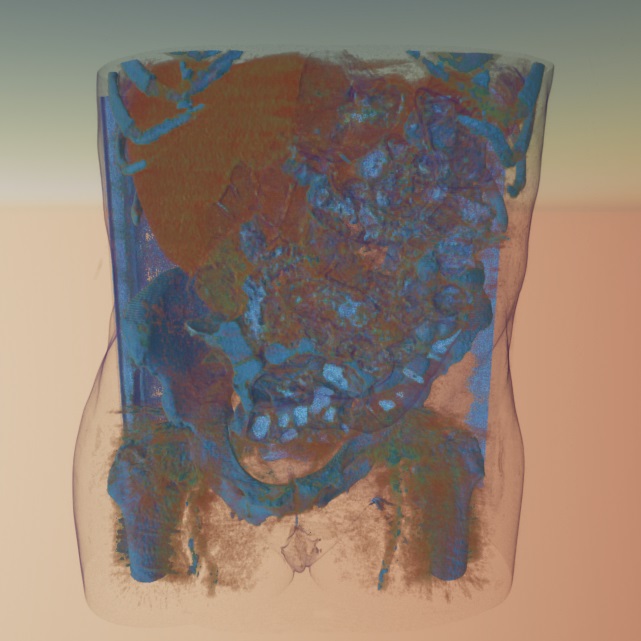

The following three pictures show the volume renderer applied to a CT dataset with different transfer functions, from left to right: "lung+skin", "bone", and "all", which also includes soft tissue.

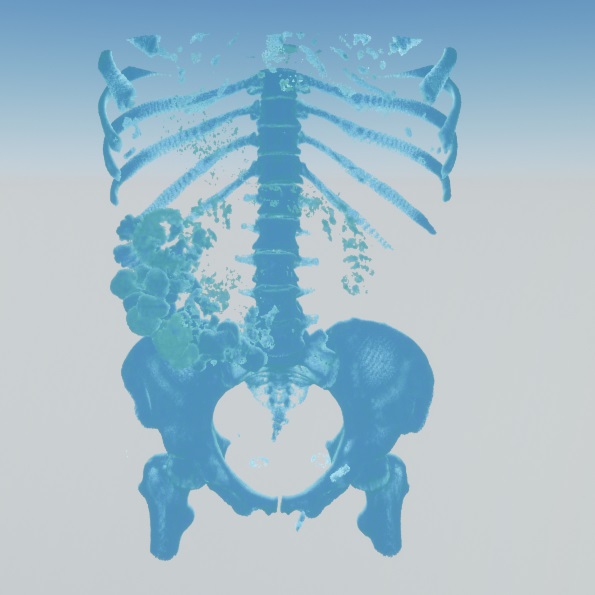

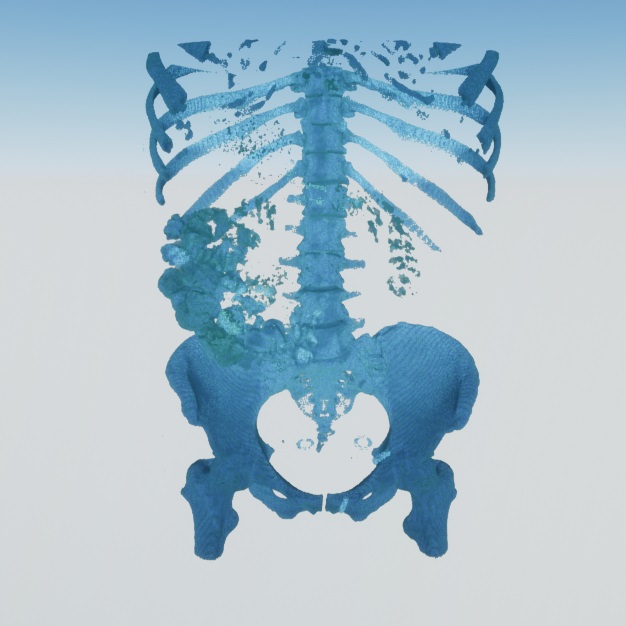
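
A transfer function such as "bone" maps the value of each sample to a color and an opacity. The sketch below assumes the samples are CT values in Hounsfield units (HU) and that the transfer function is a piecewise-linear 1D lookup defined by control points; `make_transfer` and the `BONE` control points are hypothetical, illustrative values, not the presets used in the thesis.

```python
from bisect import bisect_right


def make_transfer(points):
    """Build a piecewise-linear 1D transfer function.

    points: list of (hu, (r, g, b, a)) control points sorted by hu.
    Values outside the covered range are clamped to the first/last point.
    """
    hus = [hu for hu, _ in points]

    def transfer(hu):
        if hu <= hus[0]:
            return points[0][1]
        if hu >= hus[-1]:
            return points[-1][1]
        i = bisect_right(hus, hu)  # guarantees hus[i - 1] <= hu < hus[i]
        h0, c0 = points[i - 1]
        h1, c1 = points[i]
        f = (hu - h0) / (h1 - h0)
        # Interpolate each RGBA channel linearly between the two points.
        return tuple(lo + f * (hi - lo) for lo, hi in zip(c0, c1))

    return transfer


# Illustrative "bone"-like preset (assumed values): transparent below
# 150 HU, then ramping up to opaque white for dense bone.
BONE = make_transfer([
    (150.0, (1.0, 1.0, 0.9, 0.0)),
    (400.0, (1.0, 1.0, 0.9, 0.6)),
    (1500.0, (1.0, 1.0, 1.0, 1.0)),
])
```

With this representation, switching between presets such as "lung+skin", "bone" and "all" only means swapping the control-point list; the volume data itself is unchanged.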

The pictures below depict the volume renderer with different illumination methods, from left to right: shadow maps, the Blinn-Phong lighting model, and Blinn-Phong combined with local ambient occlusion. Local ambient occlusion improves depth perception through more detailed shadowing.

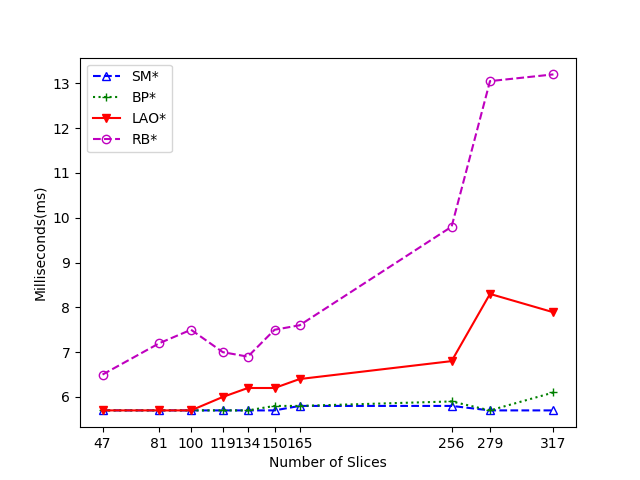
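
Blinn-Phong shading in a volume renderer needs a surface normal at each sample, which is commonly approximated by the normalized gradient of the scalar field. The following Python sketch assumes central differences for the gradient and a single directional light; `gradient`, `blinn_phong` and the coefficients `ka`, `kd`, `ks` and `shininess` are hypothetical names and default values, and neither shadow mapping nor local ambient occlusion is shown.

```python
import math


def normalize(v):
    """Return v scaled to unit length (the zero vector stays zero)."""
    n = math.sqrt(sum(c * c for c in v))
    return tuple(c / n for c in v) if n > 0.0 else (0.0, 0.0, 0.0)


def gradient(sample, p, h=1.0):
    """Central-difference gradient of the scalar field sample() at p."""
    g = []
    for axis in range(3):
        fwd, bwd = list(p), list(p)
        fwd[axis] += h
        bwd[axis] -= h
        g.append((sample(tuple(fwd)) - sample(tuple(bwd))) / (2.0 * h))
    return tuple(g)


def blinn_phong(normal, light_dir, view_dir,
                ka=0.1, kd=0.7, ks=0.2, shininess=32.0):
    """Scalar Blinn-Phong intensity; all vectors point away from the sample."""
    n = normalize(normal)
    l = normalize(light_dir)
    v = normalize(view_dir)
    h = normalize(tuple(a + b for a, b in zip(l, v)))  # half vector
    diffuse = max(sum(a * b for a, b in zip(n, l)), 0.0)
    # No specular highlight on sides facing away from the light.
    specular = (max(sum(a * b for a, b in zip(n, h)), 0.0) ** shininess
                if diffuse > 0.0 else 0.0)
    return ka + kd * diffuse + ks * specular
```

For a field whose values increase toward the interior of a structure (for example, bone surrounded by soft tissue), the gradient points inward, so the outward normal passed to `blinn_phong` is the negated gradient.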

The performance of the volume renderer is shown in the following diagram. Measurements were taken for all lighting models on multiple datasets with different numbers of slices. "SM" stands for the shadow mapping technique, "BP" for Blinn-Phong lighting, "LAO" for Blinn-Phong with local ambient occlusion, and "RB" for the volume rendering integration into Unreal Engine by Ryan Brucks on which this work is based. More complex lighting increases computation time; however, even the most advanced variant is still significantly faster than the previously existing renderer (RB) and achieves frame rates high enough for VR.

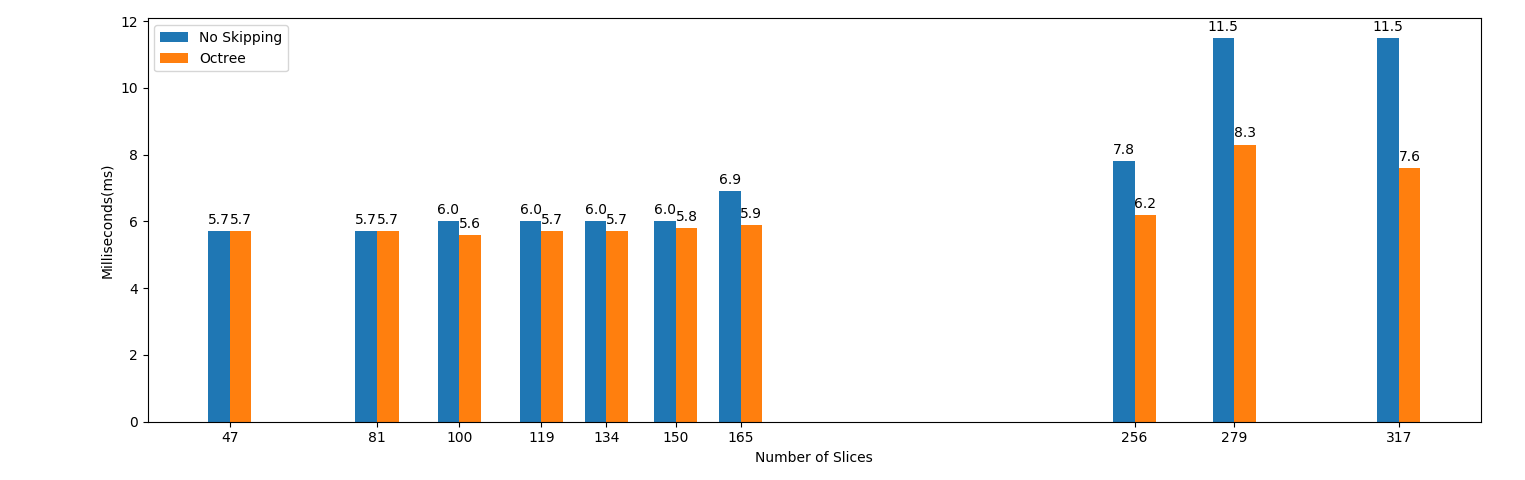

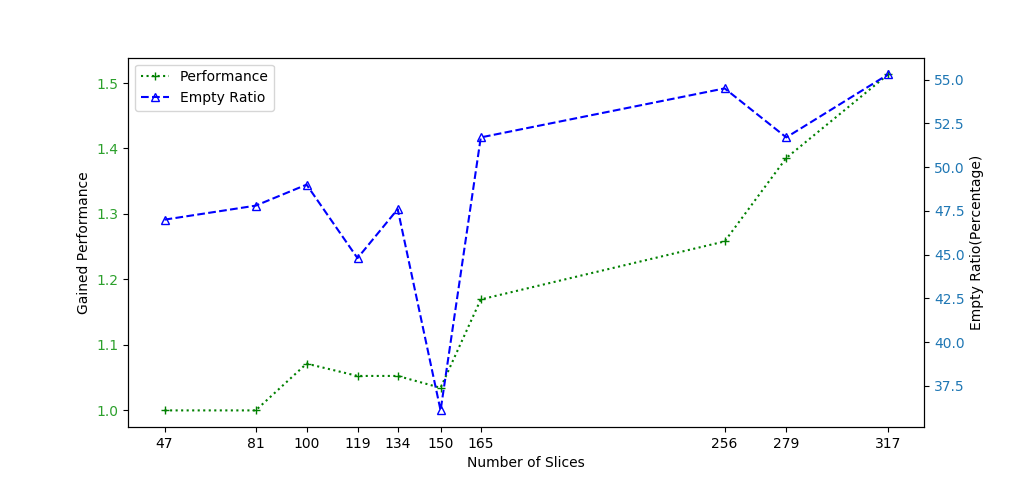

The following two diagrams show the performance impact of the octree on datasets with varying numbers of slices. The second diagram additionally relates the performance gain to the proportion of empty space in the volume. The octree is most beneficial for datasets with a high number of slices.
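
One common way to build an octree for empty-space skipping is to store the minimum and maximum value of each node. The sketch below assumes a cubic volume with a power-of-two edge length, indexed as `volume[z][y][x]`, and a visible value range derived from where the transfer function's opacity is non-zero; `Node`, `build`, `is_empty` and `count_skippable` are hypothetical names, not the thesis's data structure.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Node:
    origin: tuple  # (x, y, z) of the node's minimum corner, in voxels
    size: int      # edge length in voxels (power of two)
    vmin: float
    vmax: float
    children: List["Node"] = field(default_factory=list)


def build(volume, origin=(0, 0, 0), size=None, leaf_size=2):
    """Recursively build a min/max octree over volume[z][y][x]."""
    if size is None:
        size = len(volume)
    x0, y0, z0 = origin
    if size <= leaf_size:
        vals = [volume[z][y][x]
                for z in range(z0, z0 + size)
                for y in range(y0, y0 + size)
                for x in range(x0, x0 + size)]
        return Node(origin, size, min(vals), max(vals))
    half = size // 2
    children = [build(volume, (x0 + dx, y0 + dy, z0 + dz), half, leaf_size)
                for dz in (0, half) for dy in (0, half) for dx in (0, half)]
    return Node(origin, size,
                min(c.vmin for c in children),
                max(c.vmax for c in children),
                children)


def is_empty(node, visible_lo, visible_hi):
    """True if no voxel in the node falls inside the visible value range."""
    return node.vmax < visible_lo or node.vmin > visible_hi


def count_skippable(node, visible_lo, visible_hi):
    """Count the voxels that lie in nodes which can be skipped entirely."""
    if is_empty(node, visible_lo, visible_hi):
        return node.size ** 3
    return sum(count_skippable(c, visible_lo, visible_hi)
               for c in node.children)
```

Because the min/max values do not depend on the transfer function, such a tree can be precomputed once per dataset; changing the transfer function only changes the visible range passed to `is_empty`. During ray marching, a ray that enters an empty node can then advance directly to that node's exit point.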

Files

Full version of the master's thesis (English only)

Here is a movie that shows the volume renderer visualizing CT data in real time with different configurations:

License

This original work is copyright of the University of Bremen.

Any software of this work is covered by the European Union Public Licence v1.2.

To view a copy of this license, visit eur-lex.europa.eu.

The thesis provided above (as a PDF file) is licensed under the Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License.

Any other assets (3D models, movies, documents, etc.) are covered by the Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License.

To view a copy of this license, visit creativecommons.org.

If you use any of the assets or software to produce a publication, you must give credit and include a reference in your publication.

If you would like to use our software in proprietary software, you can obtain an exception from the above license (i.e. dual licensing).

Please contact zach at cs.uni-bremen dot de.