Social Panoramas: Sharing Experiences Using a Head Mounted Display.

The Effect of Presence and Awareness in a Remote Collaborative Environment

This work explores the concept of Social Panoramas, presents a working prototype, and reports results from two user studies that examine how panorama images, Mixed Reality, and wearable computers can be used to support remote collaboration.

This project was a collaboration between the computer graphics group of the University of Bremen and the Human Interface Technology Laboratory in New Zealand.

Introduction

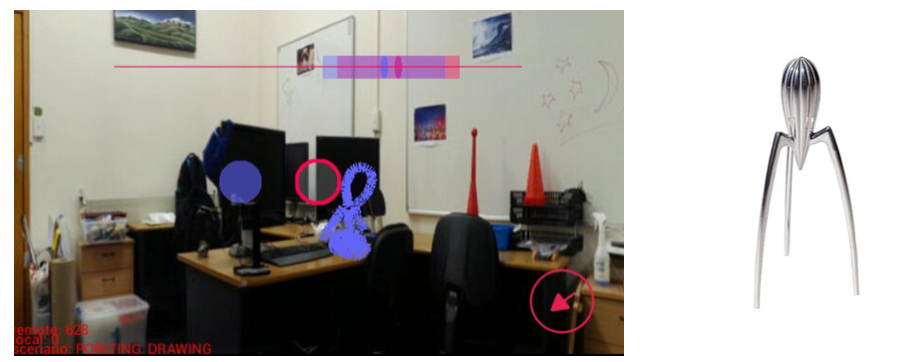

Applications for creating and sharing panoramas on mobile devices have recently gained popularity. However, interaction with these panoramas rarely goes beyond viewing and sharing a still image. In this work, a prototype was developed that allows panorama images to be shared between a Google Glass user and a remote tablet user. This simulates a scenario in which the Glass user captures a panorama of his or her surroundings and shares it with a remote collaborator in real time. Additionally, a variety of cues were introduced on the panorama to support awareness and to let both users share pointing and drawing cues for enhanced collaboration. Two studies were conducted to explore whether these cues can enhance awareness and increase Social Presence. Among the results: visual cues significantly enhance awareness compared to audio only, and Glass users prefer pointing over drawing for shared interaction.

The Prototype

To explore how panoramas and wearable computing can be used to support real-time collaboration, a prototype system was created that connects a person using Google Glass with a second user on an Android tablet.

Due to the limited processing power of Google Glass, it is assumed that the panorama has already been captured; the focus lies on supporting remote collaboration and interaction. The program was developed in Processing with the Ketai library. Processing builds the code into an Android APK file, which is then pushed to the target device (Glass or tablet) and run there.
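To illustrate how a pointing or view cue could be shared between two devices with different screen sizes, here is a minimal sketch. It is written in Python for brevity, not in Processing, and it is not the prototype's actual code. It assumes a full 360°×180° equirectangular panorama, a yaw/pitch convention chosen for this example, and hypothetical names (`PanoramaPoint`, `view_direction_to_point`, `to_pixels`). The idea is to exchange resolution-independent coordinates and let each device convert them to its own pixel grid.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class PanoramaPoint:
    """A position on the panorama in resolution-independent coordinates (hypothetical type)."""
    u: float  # horizontal position in [0, 1), 0 = left edge
    v: float  # vertical position in [0, 1], 0 = top edge


def view_direction_to_point(yaw_deg: float, pitch_deg: float) -> PanoramaPoint:
    """Map a view direction to a point on an equirectangular panorama.

    Assumed convention (not taken from the prototype): yaw 0 is the
    panorama centre and positive yaw turns right; pitch 0 is the horizon
    and positive pitch looks up.
    """
    u = ((yaw_deg + 180.0) % 360.0) / 360.0   # wrap yaw around the full circle
    pitch = max(-90.0, min(90.0, pitch_deg))  # clamp pitch to straight down/up
    v = (90.0 - pitch) / 180.0
    return PanoramaPoint(u, v)


def to_pixels(point: PanoramaPoint, width: int, height: int) -> Tuple[int, int]:
    """Convert a shared point to pixel coordinates on one device's copy of the image."""
    x = min(int(point.u * width), width - 1)
    y = min(int(point.v * height), height - 1)
    return x, y


# Usage: one user looks 90 degrees to the right at horizon level; each device
# places the cue using the size of its own panorama image (sizes are examples).
cue = view_direction_to_point(90.0, 0.0)
print(to_pixels(cue, 4096, 2048))
print(to_pixels(cue, 2048, 1024))
```

Sending `u` and `v` rather than pixel positions keeps the cue in the same place on the panorama regardless of each device's image resolution.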

User Studies

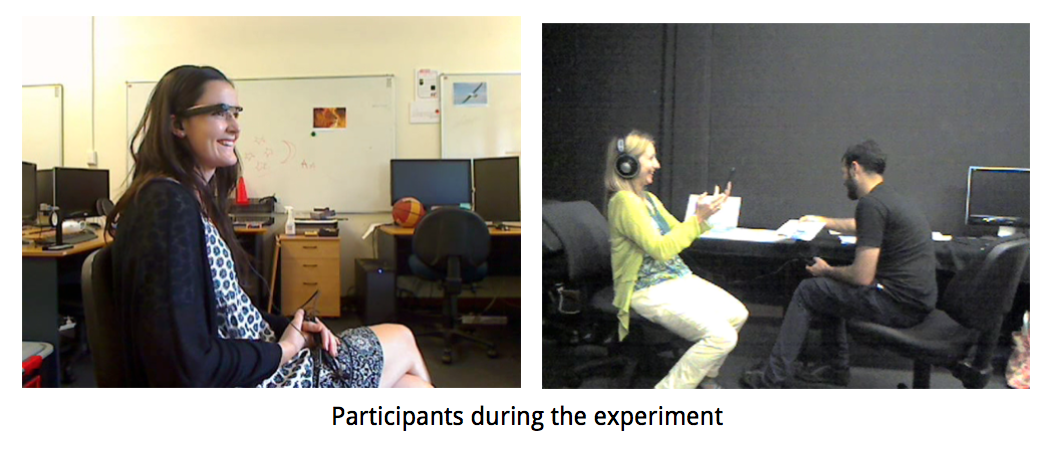

Two studies were conducted using quantitative and qualitative methods. Both studies were designed to simulate real-time collaboration between a Glass user and a tablet user and were set in an office environment. Participants were placed in different rooms to ensure that the collaboration was truly remote. The first experiment was a search task: each user had to pick an item in the panorama and let the other user look for it by providing hints. The aim was to identify the best visual cues for awareness in a remote collaborative environment. The second experiment involved several interaction methods, such as pointing and drawing inside the panorama, to evaluate the best way of interacting and its impact on presence. In each study, 24 subjects were divided into pairs, with gender equally distributed to avoid bias.

Results

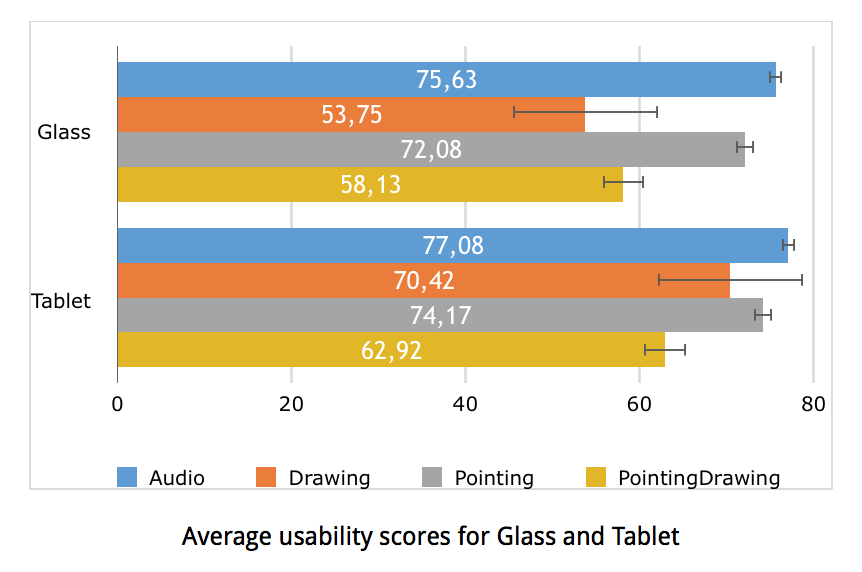

The first experiment showed that visual cues can significantly enhance social awareness when sharing a Social Panorama space, compared to using audio only. Users were able to use the visual cues to quickly follow each other around the social space and establish common ground, which gave them the feeling that they could collaborate better. However, no difference was found between the individual visual cues. The second experiment showed that adding interaction tools did not increase Social Presence for tablet users. On the Glass device, the drawing condition actually decreased presence compared to the other conditions. Glass users found the drawing condition very difficult to use, suggesting that a Social Panorama interface that is not easy to use will have a negative impact on Social Presence. Additionally, users were observed using different strategies to describe an object: some tried to draw very specific forms and shapes, while most others relied on basic geometric shapes to get their idea across.

Files

Full version of Master's Thesis: Social Panoramas: Sharing Experiences Using a Head Mounted Display

This movie presents the concept of Social Panoramas:

License

This original work is copyrighted by the University of Bremen.

Any software of this work is covered by the European Union Public Licence v1.2.

To view a copy of this license, visit eur-lex.europa.eu.

The thesis provided above (as a PDF file) is licensed under the Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License.

Any other assets (3D models, movies, documents, etc.) are covered by the Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License.

To view a copy of this license, visit creativecommons.org.

If you use any of the assets or software to produce a publication, then you must give credit and put a reference in your publication.

If you would like to use our software in proprietary software, you can obtain an exception from the above license (i.e., dual licensing). Please contact zach at cs.uni-bremen dot de.