Overview

The Virtual Cooking project has existed since 2019 and aims to teach robots to grasp objects like humans do, so that they can assist with cooking.

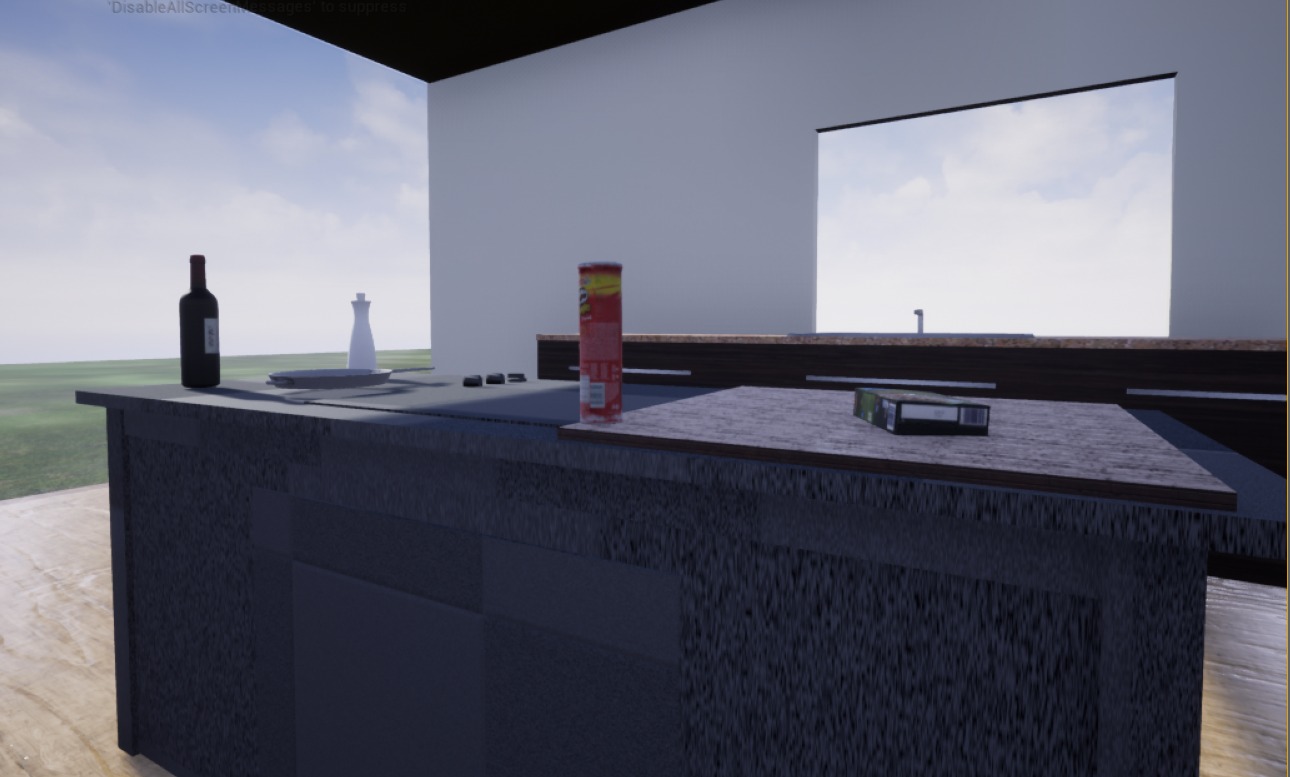

This project focuses on adding new objects to a virtual kitchen environment and analyzing the different grasp types used on them, including where each object was grasped and how much force was applied. Grasping in the virtual environment will also be compared with grasping in real life.

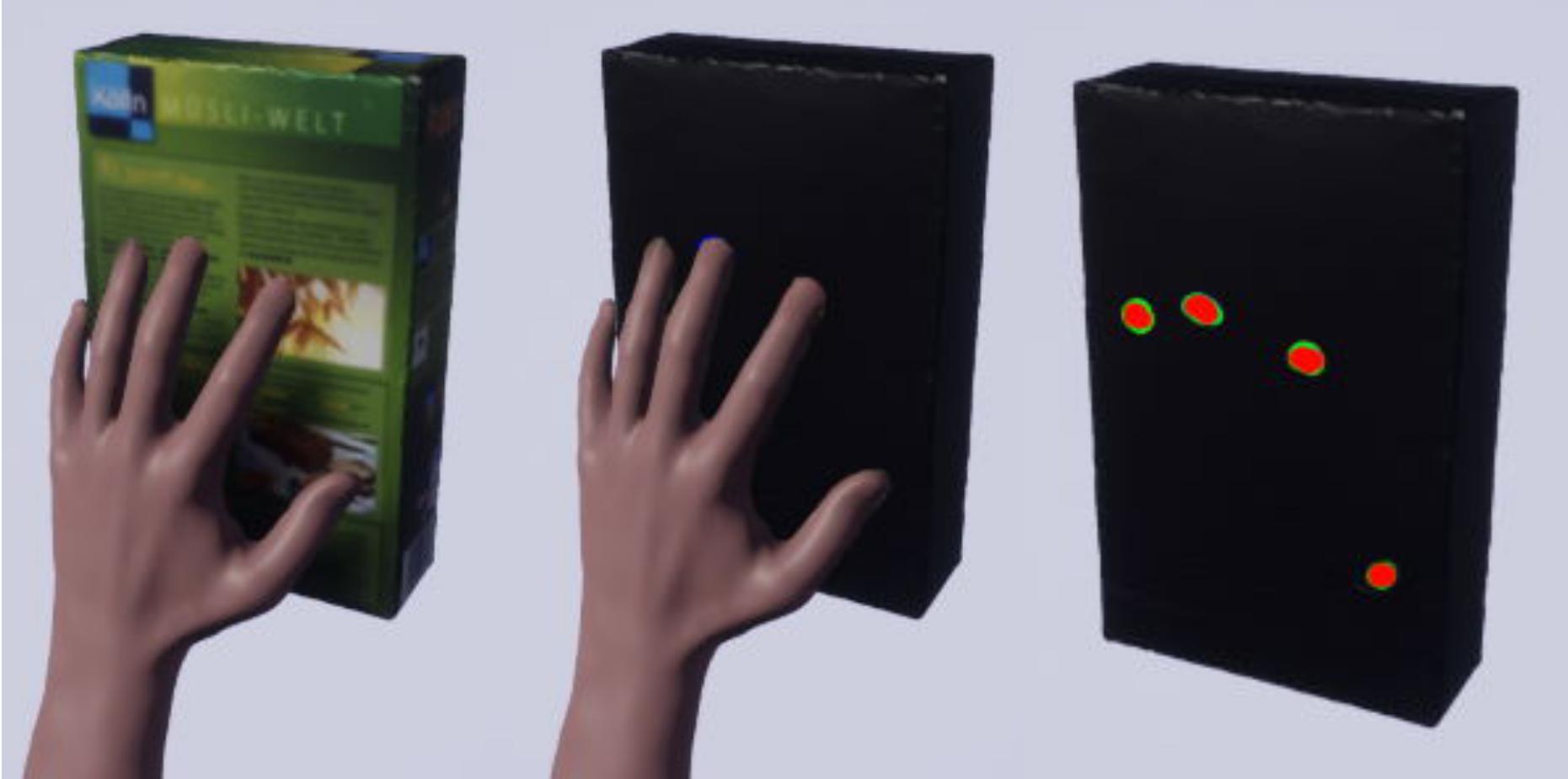

We use CyberGloves and the OptiTrack motion capture system to record human grasping movements in virtual reality. To visualize the collisions between the data gloves and the objects, heat maps are generated and stored in a database.

In general, we use Unreal Engine for 3D rendering and Meshroom for 3D modelling of the objects.